![saas[1]](https://sharepointsamurai.files.wordpress.com/2014/05/saas1.jpg?w=300&h=195)

The primary purpose of a Private Cloud Infrastructure as a Service capability is to provide well-managed infrastructure services to the Platform and Software Layers. To achieve this, the Infrastructure Layer, highlighted in the Private Cloud Reference Model diagram below, includes five capabilities.

Figure 1: Private Cloud Reference Model

This document describes these Infrastructure Layer capabilities and the impact of Private Cloud Infrastructure as a Service (IaaS) patterns on their planning and design. These patterns are defined in the Private Cloud Principles, Concepts, and Patterns document and are summarized here:

- Resource Pooling: Divides resources into partitions for management purposes.

- Physical Fault Domain: The group of physical resources dependent on a single point of failure such as an Uninterruptible Power Supply (UPS).

- Upgrade Domain: A group of resources upgraded as a single unit.

- Reserve Capacity: Unallocated resources, which take over service in the event of a failed Physical Fault Domain.

- Scale Unit: A collection of resources treated as a single unit of additional capacity.

- Capacity Plan: A model that enables a private cloud to deliver the perception of infinite capacity.

- Health Model: Defines how a service or system may remain healthy.

- Service Class: Defines services delivered by Infrastructure as a Service.

- Cost Model: The financial breakdown of a private cloud and its services.

The Health Model, Service Class, and Scale Unit patterns directly affect Infrastructure and are detailed in the relevant sections later. Conversely, private cloud infrastructure design directly affects Physical Fault Domains, Upgrade Domains, and the Cost Model. These relationships are shown in Figure 2 below.

Figure 2: Infrastructure Relationship with Patterns

Background

The private cloud principles “perception of continuous availability” and “resiliency over redundancy mindset” are designed to make a private cloud architect think differently.

Traditional solutions rely heavily on redundancy to achieve high availability and avoid failure. But redundancy at the facility (power) and infrastructure (network, server, and storage) layers is very costly. Modern cloud applications are designed with a different, holistic approach to achieving availability. This means shifting focus from building redundancy into the facility and physical infrastructure to engineering the entire solution to handle failures — eliminating them, or at least minimizing their impact.

This approach to availability relies on resilience as well as redundancy. Resilience means rapid, and ideally automatic, recovery from a failure. Redundancy is typically achieved at the application level. (A non-cloud example is Active Directory®, where redundancy is achieved by providing more domain controllers than are needed to handle the load.)

Customer interest in cost reduction will help drive adoption of this approach over the medium term. Removing power redundancy from racks or co-location rooms has a big impact on operational expenses, but this typically occurs only when the hosted application doesn’t have to be highly available, or when high availability is achieved through redundancy at the application layer – for example, Active Directory replication, or application layer mirroring such as SQL Server™ mirroring. Combining reductions in physical redundancy with virtualization results in lower capital and operational expenditure compared to a highly redundant infrastructure.

Applications that depend on a highly available infrastructure will not achieve their Service-Level Agreement (SLA) when placed on the type of infrastructure defined earlier. Customers are therefore likely to develop two environments when designing their private cloud: a standard environment with reduced facility and infrastructure redundancy, and a high-availability environment with traditional levels of redundancy.

| Standard Environment | High-Availability Environment |
| --- | --- |
| No power redundancy to the rack (for example, one in-rack UPS) | Redundant power to each server |
| No network redundancy to the servers (redundant core network) | Redundant network connections to each server |
| Local storage, possibly redundant storage, and storage network | Redundant storage presented to each server |
| Ideally no migration or possibly quick migration | Live Migration |

These two environments allow an architect to differentiate service classifications from a high-availability perspective. The Standard Environment is appropriate for stateless workloads; stateful workloads will require the High-Availability Environment. Stateful and stateless machines are managed differently. Statefulness will likely appear as a characteristic of the service classifications.

Stateless workloads (web servers, for example) are typically redundant at the server level via a load-balanced farm. These servers could easily be hosted in the Standard Environment. If all stateless workloads had an automated build, the Standard Environment could do away with any form of VM migration – and simply deploy another VM after destroying the existing one, thereby saving the cost of shared storage.

Stateful workloads, on the other hand, require a specific management approach and impose higher costs on the consumer. Unless designed for high availability at the application level, they will require some form of redundancy in the infrastructure. Further, the High-Availability Environment requires Live Migration to enable maintenance of the underlying fabric and load balancing of the VMs.
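The placement rule described in the last three paragraphs can be sketched as a small function. This is only an illustration of one reading of the guidance: the `Workload` record, its field names, and the decision to place stateful workloads with application-level high availability in the Standard Environment are assumptions, not part of the reference architecture.

```python
from dataclasses import dataclass

STANDARD = "Standard Environment"
HIGH_AVAILABILITY = "High-Availability Environment"


@dataclass(frozen=True)
class Workload:
    """Hypothetical description of a workload; field names are illustrative."""
    name: str
    stateful: bool
    app_level_ha: bool = False  # True if the application provides its own redundancy


def choose_environment(workload: Workload) -> str:
    """Return the environment suggested by the guidance above."""
    # Stateless workloads are redundant at the server level (load-balanced farm).
    if not workload.stateful:
        return STANDARD
    # Assumption: a stateful workload designed for high availability at the
    # application level does not need redundancy in the infrastructure.
    if workload.app_level_ha:
        return STANDARD
    # Every other stateful workload relies on infrastructure redundancy.
    return HIGH_AVAILABILITY
```

In practice the statefulness flag would probably be an attribute of the service classification rather than of each individual workload.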

Security

The number one concern of customers considering moving services to the cloud is security. The concerns recently expressed in industry forums are well founded, and they are reason to think through the end-to-end scenarios and attack surfaces that arise when multiple services from various departments in an organization are deployed on a private cloud.

In a cloud-based platform, whether private or public, customers work in an essentially virtualized environment. The platform or software runs on top of a shared physical infrastructure managed internally or by the service provider. The security architecture used by the applications will need to move up from the infrastructure to the platform and application layers. In a private cloud, this security is in addition to that provided by the perimeter network.

Public cloud involves handing over control to a third party and sharing services with unrelated business entities or even competitors, and it requires a high degree of trust in the provider's security model and practices. In many ways the security concerns of private cloud are similar to those of a self-hosted or outsourced datacenter; however, the move to a virtualized, self-service, service-oriented paradigm inherent in private cloud computing introduces some additional security concerns.

First is the isolation of tenants from each other and the hosting infrastructure at both the compute and network layers. Virtualization is a part of any private cloud strategy and the security of this model is totally dependent on the ability to isolate one tenant from another and prevent the careless or malicious tenant from impacting the stability of the core infrastructure upon which all tenants rely.

Another concern is Authentication, Authorization and Auditing of access to the cloud services. Self-service implies that tenant administrators can initiate management processes and workflows that were previously accomplished through IT. Any misconfiguration by these users, or excessive permissions granted to them, can impact the stability or security of the cloud solution.

Many private cloud security concerns are shared by traditional datacenter environments, which is not surprising since the private cloud is an evolution of the traditional datacenter model. These include:

- Impact to confidentiality, integrity or availability through exploitation of software vulnerabilities.

- Unauthorized access due to weak configuration or misconfiguration.

- Impact to confidentiality, integrity or availability by malicious code.

- Impact to confidentiality, integrity or availability of data.

- Compliance with internal or industry specific regulations and standards.

Secure Virtualization Platform

The biggest risk in a multi-tenant virtualized environment is that a tenant running services on the same physical infrastructure as you can break out of its isolating partition and impact the confidentiality, integrity or availability of your workload and data. The security of the virtualization platform is therefore key to isolation and non-interference between the individual virtual machines running on the infrastructure.

Highly Automated Management, Monitoring and Reporting

Many management tasks involve multiple steps that must be completed in the proper sequence by multiple administrators across multiple systems. Any shortcuts, omissions or errors can leave assets vulnerable to unauthorized access or affect the reliability of components within a solution. Orchestrating discrete management and monitoring tasks into workflows that require proper authorization and approval greatly diminishes the chance of mistakes that affect the security of the solution.
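As a rough illustration of such a workflow, the sketch below runs discrete tasks strictly in order and refuses to start without a recorded approval. The function and exception names are hypothetical, and a real orchestration engine would add authentication, retries, and persistent audit storage.

```python
from typing import Callable, List, Optional, Tuple

# A step is a (name, action) pair; the action performs one discrete task.
Step = Tuple[str, Callable[[], None]]


class NotApprovedError(Exception):
    """Raised when a workflow is started without a recorded approval."""


def run_workflow(steps: List[Step], approved_by: Optional[str]) -> List[str]:
    """Run steps strictly in order and return an audit trail."""
    if not approved_by:
        raise NotApprovedError("workflow requires an approver before it can run")
    audit = [f"approved by {approved_by}"]
    for name, action in steps:
        # An exception raised here stops the workflow, so a later step
        # never runs after an earlier one has failed.
        action()
        audit.append(f"completed {name}")
    return audit
```

Because the sequence is encoded once in the workflow, an administrator cannot skip or reorder a step by accident, and the returned audit trail records who approved the run.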

Authentication, Authorization and Auditing

Most organizations have a common capability that provides an overarching framework for authentication and access control. A private cloud introduces new hosting and hosted components into that framework, including the hosting infrastructure and the virtual machine workloads that run on it. This framework must be designed, and possibly extended, to provide a single point for managing identities and credentials, authentication services, and a common security model for access to resources across the private cloud.

Multi-layer Security

Moving to a cloud-based platform requires a change in mind-set for developers and IT security professionals. Some of the risks of the public cloud are mitigated by using a private cloud architecture; however, the perimeter security protecting a private cloud should be seen as an addition to public cloud security practices, not an alternative. You cannot apply traditional defense-in-depth security models directly to cloud computing, but you should still apply the principle of multiple layers of security. By taking a fresh look at security when you move to a cloud-based model, you should aim for a more secure system rather than accepting security that merely maintains current levels.

Security Governance

Enterprise IT systems are now typically well regulated and controlled. The security risks are well documented, and proper processes are therefore in place to develop new applications and systems or to procure them from third-party vendors. It is very unlikely that a department manager would be able to purchase and install software without approval from the IT department.

With public cloud systems and Web browser clients however, it is possible that individual department managers could bypass the IT department and provision public cloud-based software. Indeed, they might use free cloud storage systems as a convenient means to synchronize documents without even considering that they are using public cloud services. Public cloud systems might be appealing to a manager as they could very quickly provision a new system and remove what they might see as unnecessary bureaucracy. They may even be unaware of the security and compliance policies that are in place to protect the organization. In a cloud-based landscape, we must protect corporate systems and data from these unauthorized, untested systems.

Facilities

Facilities represent the physical components – buildings, racks, power, cooling, and physical interconnects – that house or support a private cloud. It is beyond the scope of this document to provide detailed guidance on facilities, but the private cloud principles affect facility design.

The definition of a Scale Unit impacts power, cooling, space, racking, and cabling requirements. The team that defines a Scale Unit should include personnel that design and manage these aspects of the facility in addition to the procurement, Capacity Planning, and Service Delivery teams. The following table lists some ramifications of Scale Unit size choices from a facilities perspective.

| Benefit | Trade-off | Benefit | Trade-off |
| --- | --- | --- | --- |
| Lower amount of physical labor needed to add a Scale Unit | Complicates the Resource Pool, Fault Domain, and Reserve Capacity equation<br>Inefficient<br>Stranded power (unutilized power)<br>Unutilized space | Allocation of full facilities units (for example, UPS, Rack, and Co-location Room) is easy to cost and engineer<br>Reduces under-utilization of power, cooling, and space | Higher amount of labor to commission |

Knowing how much power, cooling, and space each Scale Unit will consume enables the facilities team to perform effective Capacity Planning and the engineering team to effectively plan resources.
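The arithmetic behind this kind of planning can be sketched as follows. The example computes how many servers fit in one Scale Unit and which facility resource runs out first. The class names, field names, and units are assumptions made for illustration; real figures would come from the facilities team and vendor data sheets.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class ScaleUnitBudget:
    """Facility resources allocated to one Scale Unit (illustrative units)."""
    power_watts: float
    cooling_watts: float
    rack_units: int


@dataclass(frozen=True)
class ServerFootprint:
    """What one server consumes; values would come from vendor data sheets."""
    power_watts: float
    heat_watts: float
    rack_units: int


def servers_per_scale_unit(budget: ScaleUnitBudget,
                           server: ServerFootprint) -> Tuple[int, str]:
    """Return (server count, limiting resource) for a Scale Unit."""
    limits = {
        "power": int(budget.power_watts // server.power_watts),
        "cooling": int(budget.cooling_watts // server.heat_watts),
        "space": budget.rack_units // server.rack_units,
    }
    # The resource that allows the fewest servers is the one exhausted first.
    limiting = min(limits, key=limits.get)
    return limits[limiting], limiting
```

When the limiting resource is power or cooling rather than space, the unused rack space is stranded, which is one of the inefficiencies a poorly sized Scale Unit can introduce.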

Compute, Network, and Storage Fabric

The term Fabric defines a collection of interconnected compute, network, and storage resources.

The concept of homogeneous physical infrastructure, introduced in the Private Cloud Principles, Concepts, and Patterns guide, stipulates that all servers in a Resource Pool should be identical. Homogenizing the compute, storage, and network components in servers allows for predictable scale and performance. In other words, every server in a Resource Pool should have the same processor characteristics, such as family (Intel/AMD), number of cores/CPUs, and generation (for example, Xeon 2.6 Gigahertz (GHz)). The homogenized compute concept also stipulates that each server have the same amount of Random Access Memory (RAM) and the same number of connections to Resource Pool storage and networks. With these specifications met, any virtualized service could relocate from one failing or failed physical server to another physical server and continue to function identically.
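A homogeneity rule like this is straightforward to check automatically. The sketch below compares every server in a pool against the most common specification and reports the outliers. The `ServerSpec` fields mirror the characteristics listed above, but the type and function names are hypothetical.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class ServerSpec:
    """Characteristics that should be identical across a Resource Pool."""
    cpu_family: str
    cpu_generation: str
    cores: int
    ram_gb: int
    storage_connections: int
    network_connections: int


def non_conforming_servers(pool: Dict[str, ServerSpec]) -> List[str]:
    """Return names of servers whose spec differs from the most common spec."""
    if not pool:
        return []
    # Frozen dataclasses are hashable, so identical specs can be counted.
    baseline, _count = Counter(pool.values()).most_common(1)[0]
    return sorted(name for name, spec in pool.items() if spec != baseline)
```

An empty result means the pool is homogeneous with respect to the listed characteristics; it says nothing about firmware or driver versions, which a fuller check would also compare.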

Physical Server

The physical server hosts the hypervisor and provides access to the network and shared storage. In the Standard Environment, the facilities do not provide power redundancy, so the servers do not require dual power supplies.

Every server will be a member of a single compute Resource Pool and a single Physical Fault Domain. Assuming all servers are homogeneous (as recommended), they will all be members of a single Upgrade Domain.

Capacity Planning must be done for each server specification, as its size (CPU and RAM specification) will determine how many virtual machines it is able to host. This is covered in greater detail in the Private Cloud Planning Guide for Service Delivery.

Server specification selection impacts the Scale Unit, Cost Model, and service class. Scale Units have a finite amount of power and cooling, so server efficiency has an impact on a private cloud. It may be that all power in a Scale Unit is consumed before all physical space. The cost of servers impacts the Cost Model irrespective of whether this cost is passed onto the consumer. Selecting only small one-unit servers will limit the architect’s ability to define a range of service classifications. The server needs to accommodate the largest service classification after the parent partition and hypervisor consume their resources.
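The last point can be made concrete with a small sizing calculation: subtract what the parent partition and hypervisor consume, then see how many VMs of a given service classification fit. This is a sketch that assumes no CPU or memory oversubscription; the default reservation values are placeholders, not recommendations.

```python
def vms_per_host(host_cores: int, host_ram_gb: int,
                 vm_cores: int, vm_ram_gb: int,
                 reserved_cores: int = 1, reserved_ram_gb: int = 4) -> int:
    """Number of VMs of one service classification that fit on a host.

    reserved_cores and reserved_ram_gb stand for what the parent partition
    and hypervisor consume; the defaults are illustrative placeholders.
    """
    usable_cores = host_cores - reserved_cores
    usable_ram_gb = host_ram_gb - reserved_ram_gb
    # The scarcer of the two resources determines the VM count.
    fit = min(usable_cores // vm_cores, usable_ram_gb // vm_ram_gb)
    return max(0, fit)
```

A result of 0 for the largest service classification indicates that the chosen server specification cannot host that classification at all.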

Microsoft research shows servers with processors one or two models behind the latest versions offer a better price, performance, and power consumption ratio than the newer processors.

The Private Cloud Reference Architecture dictates that the “concept of homogenization of physical infrastructure” be adopted for each Resource Pool. Server specifications (CPU, RAM) may vary between Resource Pools, but this complicates Fabric Management (defined in the Private Cloud Planning Guide for Systems Management), which spans Resource Pools and Capacity Planning, and may necessitate different service classes for each pool.

Delivering IaaS requires that the service is pre-defined and delivered consistently. To achieve consistent performance, the VMs must have equal resources available to them from each server, in other words, the same CPU cycles and RAM. If servers within a Resource Pool do not provide homogeneous performance and RAM, consistent performance cannot be guaranteed.

Absolute homogenization may be hard to maintain over the long term as server models may be discontinued by the vendor; therefore relationships between Resource Pools, Scale Units, and server model longevity must be considered carefully.

The following table lays out some of the benefits and trade-offs of homogeneous and heterogeneous Resource Pools.

| Resource Pool type | Benefit | Trade-off |
| --- | --- | --- |
| Homogeneous | Predictable performance within a Resource Pool<br>Guaranteed Live Migration across the fabric | Reuse of existing equipment may not be possible |
| Heterogeneous | Possible reuse of existing equipment<br>Allows for a broader range of server classes | VMs cannot be moved between Resource Pools<br>More upfront work to make sure Live Migration will work appropriately |

In addition, servers should support the following requirements to achieve an automated infrastructure and resiliency:

Automated Infrastructure

- Wake On Local Area Network

- Remote BIOS Upgrades/Configurations

- Boot from Flash

- Pre-Boot Execution Environment (PXE) for remote imaging

- Virtualization Support

- Data Execution Prevention (DEP)

- 64-bit CPUs

- Standard Environment: 2 network adapters that support TCP Offload Engine (TOE)

  - Management x 1

  - Consumer x 1

- High-Availability Environment: 4 or 6 redundant network adapters that support TOE

  - Management x 2: Could be teamed for redundancy

  - Live Migration x 2: Could be teamed for redundancy

  - Consumer x 2: Could be teamed for resiliency

- Standard Environment: Storage connections that meet the required service classification

  - For Internet Small Computer System Interface (iSCSI), 1 x hardware iSCSI initiator: Could use vendor-specific software to achieve resiliency

  - For Fiber Channel, 1 x Fiber Channel host bus adapter (HBA): Could use vendor-specific software to achieve resiliency

- High-Availability Environment: Redundant storage connections that meet the required service classification

  - For iSCSI, 2 x hardware iSCSI initiators: Could use vendor-specific software to achieve resiliency

  - For Fiber Channel, 2 x Fiber Channel HBAs: Could use vendor-specific software to achieve resiliency

To dynamically initiate remediation events in case of failure or impending failure of server components, each server is required to expose warnings, errors, and state information for the following:

- CPU

- State (Busy/Ready)

- Utilization

- Heat

- Fans

- RAM

- Utilization

- Error-Correcting Code (ECC) Errors

- Storage

- Read/Write Failures

- Predictive Failures

- Network Interface Cards (NICs)

- Port State

- Send/Receive Errors

- Motherboard

- Power Supply

- State

- Active / Passive

- Power Output Variations

- Fans
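How a monitoring system might turn this information into remediation events can be sketched as below. The severity levels, component keys, and action names are all hypothetical; the point is only that a warning (impending failure) and an error (actual failure) lead to different automated responses.

```python
from enum import Enum
from typing import Dict


class Severity(Enum):
    OK = 0
    WARNING = 1  # impending failure, for example a predictive disk failure
    ERROR = 2    # the component has failed


def remediation(readings: Dict[str, Severity]) -> str:
    """Pick a remediation action from per-component health readings."""
    worst = max(readings.values(), key=lambda s: s.value, default=Severity.OK)
    if worst is Severity.ERROR:
        # The host can no longer be trusted; restart its VMs elsewhere.
        return "restart-vms-elsewhere"
    if worst is Severity.WARNING:
        # The host still works; move VMs off it and schedule maintenance.
        return "drain-and-schedule-maintenance"
    return "none"
```

For example, a reading of `{"cpu": Severity.OK, "storage": Severity.WARNING}` would lead to the host being drained before the disk actually fails.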

Storage

To achieve the perception of infinite capacity, proactive Capacity Management must be performed, and storage capacity added ahead of demand. The amount of storage added as a single unit (a Storage Scale Unit) will depend on the rate of storage consumption, hardware vendor lead time, and the level of risk the business wishes to assume (that is, weighing remaining unallocated capacity against the possibility of exhausting all capacity). This is detailed in Private Cloud Planning Guide for Service Delivery.
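The factors named above combine into a simple reorder rule: order the next Storage Scale Unit once the remaining runway is no longer than the vendor lead time plus a safety margin that reflects the accepted risk. The sketch assumes a constant daily consumption rate, and the function and parameter names are illustrative.

```python
def days_until_exhausted(unallocated_tb: float, daily_consumption_tb: float) -> float:
    """Days of capacity left at the current (assumed constant) consumption rate."""
    if daily_consumption_tb <= 0:
        return float("inf")
    return unallocated_tb / daily_consumption_tb


def should_order_scale_unit(unallocated_tb: float, daily_consumption_tb: float,
                            vendor_lead_time_days: float,
                            safety_margin_days: float = 0.0) -> bool:
    """True when a new Storage Scale Unit must be ordered now.

    safety_margin_days expresses the level of risk the business accepts:
    a larger margin means ordering earlier and holding more unallocated capacity.
    """
    runway = days_until_exhausted(unallocated_tb, daily_consumption_tb)
    return runway <= vendor_lead_time_days + safety_margin_days
```

A real capacity plan would replace the constant rate with a forecast, but the trade-off between unallocated capacity and the risk of exhaustion has the same shape.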

Storage will be placed in Storage Resource Pools, from which it is automatically allocated to consumers. Though Resource Pools are not a new concept for Storage Area Networks (SANs), allowing the infrastructure to allocate storage on-demand based on policy may be a new approach for many organizations. Further, the SAN must present an application programming interface (API) to Fabric Management to allow automation of allocation and provisioning.

The storage provided within a private cloud must be consistent in performance and availability. This means the Input/output (I/O) Operations per Second (IOPS) cannot vary significantly. If there is a need to make different levels of storage performance available to users of a private cloud, it can be accomplished through multiple service classifications. A private cloud is intended, however, to provide a limited set of standardized services; therefore, variances should be carefully considered.

The cost of providing the storage within a private cloud should be clearly defined. This permits metering, and possibly allocation of costs to consumers. If different classes of storage are provided for different levels of performance, their costs should be differentiated. For example, if a SAN is being used in an environment, it is possible to have storage tiers where faster Solid State Drives (SSD) are used for more critical workloads. Less-critical workloads can be placed on Tier 2 Serial Attached SCSI (SAS) drives, and even less-critical workloads on Tier 3 SATA drives.

The Private Cloud Reference Architecture assumes the storage arrays and the storage network are redundant, with no single point of failure beyond the array itself. In this regard, the storage array can be considered a Fault Domain.

The design should adopt some form of de-duplication technology to reduce storage consumption.

As the storage array is a single point of failure, it should expose health information to the systems monitoring service to make sure that any outages and their impact are quickly identified. Providing snapshots and mirroring between arrays for continuity is beyond the scope of this guidance.

Physical Storage Switches

If an architect follows the recommendation to allow any VM to execute on any server in a Resource Pool, Virtual Hard Disks (VHDs) should reside on a SAN. While it is possible to host VHDs locally, the guidance assumes that they are hosted on a SAN.

A key decision in private cloud design is whether to use iSCSI or Fiber Channel for storage. If iSCSI is utilized to house virtual workload storage, it is suggested that each virtualization host include iSCSI HBAs instead of standard NICs for performance reasons.

The purpose of a storage switch is to provide resilient and flexible connectivity between shared storage and physical servers. The storage switch must meet peak storage I/O requirements for the virtual services. In addition, the interconnect speeds between switches should be evaluated to determine the maximum throughput for switch-to-switch communications. This may limit the maximum number of hosts that can be placed on each switch.

While switch throughput is important, attention should also be paid to the number of available switch ports needed to support the physical virtualization hosts. Refer to the switch hardware vendor to make sure it meets these requirements.
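The two constraints discussed above, available ports and interconnect throughput, can be combined into a rough upper bound on hosts per switch. This sketch makes a deliberately pessimistic assumption that all host storage traffic crosses the switch-to-switch links at peak; the parameter names are illustrative, and vendor sizing guidance should take precedence.

```python
def max_hosts_per_switch(total_ports: int, uplink_ports: int,
                         storage_processor_ports: int,
                         uplink_gbps_per_port: float,
                         peak_host_gbps: float) -> int:
    """Upper bound on hosts per storage switch.

    Assumes, pessimistically, that every host's peak storage traffic crosses
    the interconnect at the same time. peak_host_gbps must be greater than zero.
    """
    # Ports left after uplinks and storage processor connections.
    by_ports = total_ports - uplink_ports - storage_processor_ports
    # Hosts whose combined peak traffic the interconnect can carry.
    by_interconnect = int((uplink_ports * uplink_gbps_per_port) // peak_host_gbps)
    return max(0, min(by_ports, by_interconnect))
```

Whichever bound is lower is the constraint worth raising with the switch vendor.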

Physical storage switch requirements include:

- Dedicated switch port on each switch for each host and storage processor connection. This is needed for redundancy and I/O optimization.

- iSCSI traffic separated from all other IP traffic, preferably on its own switched infrastructure or logically through a virtual local area network (VLAN) on a shared IP switch. This segregates data access from traditional network communications for host-to-host and workload operations and provides data security.

- Redundant power supplies and cooling fans, to increase the number of faults the storage switch can withstand.

- Programmatic interface to support firmware upgrades and configuration.

Physical Storage Subsystem

Stateless workloads can be hosted on Direct-Attached Storage (DAS) instead of SAN, driving down the cost of service. The downside is that Fabric Management has to handle transitioning active user connections between VMs homed on different hosts, as VM migration is impossible. This may mean tighter integration with the network than is specified in this document (in order to know when all connections to a VM have been abandoned or terminated before stopping the VM, for example).

SAN storage, while more expensive, provides advantages:

- The VM can be re-homed to other servers.

- Live Migration can be employed.

- Backup (of the VM) can occur out of band (for example, taking snapshots).

- Capacity can be increased almost limitlessly.

The logical storage configuration (or storage classification) should be designed to meet requirements in the following areas:

- Capacity: To provide the required storage space for the virtual service data and backups.

- Performance Delivery: To support the required number of IOPS and throughput.

- Fault Tolerance: To provide the desired level of protection against hardware failures. If a SAN is used, this may include redundant HBAs and switches.

- Manageability: To provide a high degree of platform self-management. This requires a programmatic interface to provide automated configuration and firmware upgrades.

Additionally, a private cloud must meet the following requirements to make sure that it is highly available and well-managed:

- Multiple paths to the disk array for redundancy. Should a disk fail, hot or warm spare disks can provide resiliency in the provisioned storage. Consult the storage vendor for specific recommendations.

- A storage system with automatic data recovery, to allow an automatic background process to rebuild data onto a spare or replacement disk drive when another disk drive in the array fails.

- Redundant power supplies and cooling fans, to increase the number of faults the Storage Array can withstand.

Network

The Private Cloud Reference Architecture assumes that the network presented to servers is not redundant for the Standard Environment and is redundant for the High-Availability Environment.

The network is tightly coupled with physical servers. Each Compute Resource Pool includes the network switches necessary for the servers to operate; each Scale Unit includes a pre-defined and fixed number of servers and switches.

The switches must be monitored to make sure no workloads saturate the network. A private cloud is designed as a general-purpose infrastructure. Workloads that challenge the network with high utilization may not be good candidates for a private cloud unless separate Resource Pools are created specifically to handle these workloads.

Switches are members of network upgrade domains, but the definition and membership of upgrade domains will likely vary depending on the nature of the upgrade. If switches are not redundant (for example, in the Standard Environment), the whole Resource Pool will need to be taken offline for switch maintenance, which requires switch reboots.

Network hardware (switches and load balancers) must expose an API to Fabric Management that enables automated management of networks, such as creation of VLANs and Virtual IP addresses (VIPs), and addition or removal of hosts from a VIP.
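What such an API might look like from the Fabric Management side is sketched below as an abstract interface plus an in-memory stand-in. None of these method names come from a real vendor API; they are hypothetical and simply mirror the operations listed above.

```python
from typing import Dict, Protocol, Set


class NetworkFabricApi(Protocol):
    """Hypothetical operations Fabric Management needs from network hardware."""

    def create_vlan(self, vlan_id: int, name: str) -> None: ...
    def create_vip(self, vip: str) -> None: ...
    def add_host_to_vip(self, vip: str, host: str) -> None: ...
    def remove_host_from_vip(self, vip: str, host: str) -> None: ...


class InMemoryFabric:
    """Stand-in for a vendor driver; it only records state in memory."""

    def __init__(self) -> None:
        self.vlans: Dict[int, str] = {}
        self.vips: Dict[str, Set[str]] = {}

    def create_vlan(self, vlan_id: int, name: str) -> None:
        # 802.1Q reserves IDs 0 and 4095, leaving 1-4094 usable.
        if not 1 <= vlan_id <= 4094:
            raise ValueError(f"invalid VLAN ID: {vlan_id}")
        self.vlans[vlan_id] = name

    def create_vip(self, vip: str) -> None:
        self.vips.setdefault(vip, set())

    def add_host_to_vip(self, vip: str, host: str) -> None:
        self.vips[vip].add(host)

    def remove_host_from_vip(self, vip: str, host: str) -> None:
        self.vips[vip].discard(host)


def drain_host(fabric: NetworkFabricApi, vip: str, host: str) -> None:
    """Example workflow step: stop sending new connections to a host."""
    fabric.remove_host_from_vip(vip, host)
```

Writing workflows against the interface rather than a specific device keeps the automation independent of the hardware vendor, and the in-memory stand-in allows those workflows to be tested without touching real switches.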

Physical Network

Key decisions in planning the bandwidth of the physical networks relate to the use of Live Migration, the requirements of port security, and the need for link aggregation. The following table shows the benefits and trade-offs of using Live Migration:

| Option | Benefit | Trade-off |
| --- | --- | --- |
| With Live Migration | Transparent movement of Stateful applications<br>Transparent infrastructure upgrades | Additional network switch ports will be required<br>More network adapters are required per virtualization host<br>Greater Reserve Capacity may be required because of cluster size limitations of 16 nodes |
| Without Live Migration | Fewer switch ports are required<br>Fewer network adapters are required per virtualization host<br>Ideal for stateless applications | No transparent movement of Stateful applications<br>For Stateful applications, infrastructure upgrades will need to be coordinated with VM owners |

To support the dynamic characteristics of a private cloud, a network switch should support a remote programmatic interface for firmware upgrades and for prioritization of traffic for quality of service. These switches should be dedicated to a private cloud to maintain predictable performance and to minimize risks associated with human interaction. As defined earlier, the servers need to be connected to at least two networks, management and consumer, plus a Live Migration network if required. The connections should always be the same on every server; for example, network adapter 1 to management, network adapter 2 to consumer, and network adapter 3 to Live Migration.

If iSCSI is chosen for the storage interconnects, iSCSI traffic should reside in an isolated VLAN in order to maintain security and performance levels. This iSCSI traffic should not share a network adapter with other traffic, for example the management or consumer network traffic.

The interconnect speeds between switches should be evaluated to determine the maximum bandwidth for communications. This could affect the maximum number of hosts which can be placed on each switch.

When designing network connectivity for a well-managed infrastructure, the virtualization hosts should have the following specific networking requirements:

- Support for 802.1Q VLAN Tagging: To provide network segmentation for the virtualization hosts, supporting management infrastructure and workloads. This is the preferred method to help secure and isolate data traffic for a private cloud.

- Remote Out-of-band Management Capability: To monitor and manage servers remotely over the network regardless of whether the server is turned on or off.

- Support for PXE Version 2 or Later: To facilitate automated server provisioning.

To dynamically initiate remediation events in response to the failure or impending failure of network switch components, each switch is required to expose warnings, errors, and state information for the following:

- CPU

- Flash Memory

- Interface Details

- Port State

- Port Errors

- Bandwidth Utilization

- Power Supply

- State

- Active / Passive

- Power Output Variations

- Fans

Storage Switch/Subsystem Health Model

To dynamically initiate remediation events in response to either the failure or impending failure of storage switches and storage subsystem components, each component is required to expose warnings, errors, and state information for the following:

Storage Switch

- CPU

- Flash Memory

- Interface Details

- Port State

- Port Errors

- Bandwidth Utilization

- Power Supply

- State

- Active / Passive

- Power Output Variations

- Fans

Storage Subsystem

- CPU

- Flash Memory

- Service Processor

- Disks

- Read / Write Failures

- Predictive Failures

- Power Supply

- State

- Active / Passive

- Power Output Variations

- Fans

Hypervisor

The hypervisor exposes the VM services to consumers. It needs to be configured identically on all hosts in a Resource Pool, and ideally all hosts in the private cloud. Fabric Management will orchestrate the addition of virtual switches, machines, and disks.

An architect needs to decide whether the private cloud should use CPU Resource Reservations to ensure predictable VM performance. The following table lists the benefits and trade-offs:

| Option | Benefit | Trade-off |
| --- | --- | --- |
| With CPU Resource Reservations | Consistent VM performance for consumers | Fixed number of VMs per host might lead to low utilization of resources<br>Toolset may not set resource reservations |
| Without CPU Resource Reservations | Variable number of VMs per host means resource utilization can be maximized | Consumers do not experience consistent VM performance<br>One VM can adversely affect the processing performance of others |

The decision is driven by whether efficiency or consistency is more important for the private cloud.

The architect could elect to provide different classes of service: one that uses resource reservations to deliver predictability, and another that shares the resources. Separate Resource Pools could be deployed accordingly, along with differential pricing to encourage the desired consumer behavior.

Resource reservations will not prevent a host from saturating the network and crippling the performance of other hosts. As stated in the Network section earlier, this needs monitoring.

Parent Partition

The parent partition provides the hypervisor with access to physical resources such as network and storage. It also hosts the hypervisor management interfaces. The parent partition needs to be configured identically on all servers in a Resource Pool.

If an architect elects to create a service classification which depends on consuming LUNs directly (not via the parent partition), the parent partition must be configured to present the pass-through for this storage. Further, this storage must be available to all parent partitions in that Resource Pool to enable VM portability between hosts.
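The portability requirement can be checked mechanically: a pass-through LUN is only safe to use if every parent partition in the Resource Pool can see it. This is a minimal sketch under the assumption that an inventory of visible LUNs per host is available; the host names, LUN identifiers, and the `hosts_missing_lun` helper are hypothetical.

```python
from typing import Dict, List, Set


def hosts_missing_lun(lun_id: str, visible_luns: Dict[str, Set[str]]) -> List[str]:
    """Return the hosts in a Resource Pool whose parent partition cannot see the LUN."""
    return sorted(host for host, luns in visible_luns.items() if lun_id not in luns)


# Hypothetical inventory: host name -> LUNs presented to its parent partition.
pool = {
    "host-a": {"lun-01", "lun-02"},
    "host-b": {"lun-01"},
    "host-c": {"lun-01", "lun-02"},
}

print(hosts_missing_lun("lun-02", pool))  # hosts that would block VM portability
print(hosts_missing_lun("lun-01", pool))  # empty list: the LUN is visible everywhere
```

An empty result means a VM using that LUN can move to any host in the pool; a non-empty result names the hosts that need the storage presented first.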

The parent partition displays health information for the server, the parent partition operating system, and the hypervisor. The health monitoring system, in turn, consumes this information to enable Capacity Management and Fabric Management.

Management Layers

Task Execution

Task execution comprises the low-level management operations that can be performed on a platform, generally surfaced through the command line or an Application Programming Interface (API). The capability to execute tasks must not only exist; its usage semantics should also be consistent across members of a fault domain so that automation can use a common format. When semantics differ, the automation layer must compensate through custom code in the orchestration, or may even require different execution hosts or engines within a fault domain.
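One way to picture the compensation described above is a thin adapter that hides differing command semantics behind a common interface. The sketch below is an assumption-laden illustration: the two vendor classes and the command strings they return are invented and do not correspond to any real CLI.

```python
from typing import List, Protocol


class TaskExecutor(Protocol):
    """Common task-execution interface that the automation layer relies on."""

    def start_vm(self, vm_name: str) -> str: ...


class VendorAExecutor:
    def start_vm(self, vm_name: str) -> str:
        # Hypothetical command syntax for platform A.
        return f"vendor-a power-on {vm_name}"


class VendorBExecutor:
    def start_vm(self, vm_name: str) -> str:
        # Hypothetical command syntax for platform B.
        return f"vendor-b vm start --name {vm_name}"


def start_all(executor: TaskExecutor, vm_names: List[str]) -> List[str]:
    """Automation code written once against the common interface."""
    return [executor.start_vm(name) for name in vm_names]


print(start_all(VendorAExecutor(), ["vm1", "vm2"]))
print(start_all(VendorBExecutor(), ["vm1"]))
```

Each adapter is custom code that would be unnecessary if every member of the fault domain exposed the same semantics in the first place.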

Automation

The automation layer is made up of the foundational automation technology plus a series of single purpose commands and scripts that perform operations such as starting or stopping a virtual machine, restarting a server, or applying a software update. These atomic units of automation are combined and executed by higher-level management systems. The modularity of this layered approach dramatically simplifies development, debugging, and maintenance.

Orchestration

In much the same way that an enterprise resource planning (ERP) system manages a business process such as order fulfillment and handles exceptions such as inventory shortages, the orchestration layer provides an engine for IT-process automation and workflow. The orchestration layer is the critical interface between the IT organization and its infrastructure and transforms intent into workflow and automation.

Ideally, the orchestration layer provides a graphical user interface in which complex workflows that consist of events and activities across multiple management-system components can be combined, to form an end-to-end IT business process such as automated patch management or automatic power management. The orchestration layer must provide the ability to design, test, implement, and monitor these IT workflows.
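The layering of atomic automation units under an orchestration workflow can be sketched in a few lines. This is a simplified illustration, not a real orchestration engine: the step functions, the `run_workflow` helper, and the context dictionary are all hypothetical stand-ins for what a management product would provide.

```python
from typing import Callable, Dict, List, Tuple

Step = Tuple[str, Callable[[Dict], None]]


# Atomic, single-purpose automation units (hypothetical stand-ins).
def stop_vm(ctx: Dict) -> None:
    ctx["state"] = "stopped"


def apply_update(ctx: Dict) -> None:
    if ctx["state"] != "stopped":
        raise RuntimeError("VM must be stopped")
    ctx["patched"] = True


def start_vm(ctx: Dict) -> None:
    ctx["state"] = "running"


def run_workflow(steps: List[Step], ctx: Dict) -> List[str]:
    """Run steps in order, record each outcome, and halt on the first failure."""
    log = []
    for name, action in steps:
        try:
            action(ctx)
            log.append(f"{name}: ok")
        except Exception as exc:
            log.append(f"{name}: failed ({exc})")
            break
    return log


PATCH_WORKFLOW: List[Step] = [
    ("stop_vm", stop_vm),
    ("apply_update", apply_update),
    ("start_vm", start_vm),
]

vm = {"state": "running", "patched": False}
print(run_workflow(PATCH_WORKFLOW, vm))
```

Because each step is independent, the same units can be recombined into other workflows, and the log gives the monitoring view that the orchestration layer is expected to provide.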

Service Management

Service management provides the means for automating and adapting IT service management best practices, such as those found in the IT Infrastructure Library (ITIL), to provide built-in processes for incident resolution, problem resolution, and change control.

Self Service

Self-service capability is a characteristic of private cloud computing and must be present in any implementation. The intent is to present users with the options available for provisioning in their organization. The capability may be basic, offering only the provisioning of virtual machines with a pre-defined configuration, or more advanced, allowing configuration options on top of the base configuration and leading up to a platform capability or service.

Self-service capability is a critical business driver: it lets members of an organization respond to business needs with IT capabilities more quickly, in a manner that conforms to internal IT requirements and governance.

This means the interface between IT and the business is abstracted to a simple, well-defined, and approved set of service options, presented as a menu in a portal or available from the command line. The business selects these services from the catalog, begins the provisioning process, and is notified upon completion; the business is then charged only for what it actually uses.

This is analogous to the capability available on public cloud platforms.

The entities that consume self-service capabilities in an organization are individual business units, project teams, or any other department that needs to provision IT resources. These entities are referred to as Tenants. In a private cloud, tenants are granted the ability to provision compute and storage resources as they need them to run their workloads. Connectivity to these resources is managed behind the scenes by the fabric management layers of the private cloud.

Tenant administrators are granted access to a self-service portal where they can initiate workflows to provision virtualized services in the appropriate configuration and capacity. For example, compute resources may be available in small, medium, or large instance capacities, along with storage of the appropriate size and performance characteristics. Resources are provisioned without any intervention from IT infrastructure personnel, and overall progress is tracked by the fabric management layer and reported through the portal.

A chargeback model defines how tenants are charged for using cloud resources. The charge is typically the number and size of resources provisioned multiplied by the length of time they are provisioned. This information is available to tenant administrators through the self-service portal, as is the ability to produce cost reports.
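The chargeback calculation described here is simple arithmetic: count times a size-dependent rate times duration. The sketch below assumes a flat hourly rate per instance size; the rates, the `Usage` record, and the `charge` function are hypothetical and stand in for whatever the organization's Cost Model defines.

```python
from dataclasses import dataclass
from typing import List

# Hypothetical hourly rates per instance size, in currency units.
HOURLY_RATE = {"small": 0.05, "medium": 0.10, "large": 0.20}


@dataclass
class Usage:
    size: str     # "small", "medium", or "large"
    count: int    # number of instances provisioned
    hours: float  # how long they were provisioned


def charge(records: List[Usage]) -> float:
    """Total charge = sum over records of rate(size) * count * hours."""
    total = sum(HOURLY_RATE[r.size] * r.count * r.hours for r in records)
    return round(total, 2)


tenant_usage = [Usage("small", 2, 100), Usage("large", 1, 10)]
print(charge(tenant_usage))
```

With these assumed rates, two small instances for 100 hours cost 10.00 and one large instance for 10 hours costs 2.00, for a total of 12.00.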

Tenants are granted the ability to manage, monitor and report on the resources that they have provisioned.

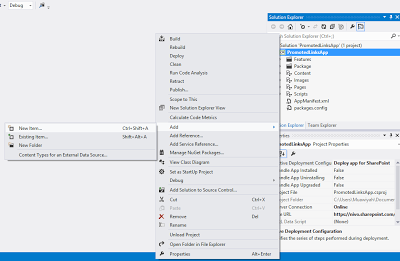

![DuetEnterpriseDesign[1]](https://sharepointsamurai.files.wordpress.com/2014/10/duetenterprisedesign1.jpg?w=479&h=238)

Input the site URL that we need to open:

Enter your site credentials here:

Now we need to create the new external content type. Here we have the options for changing the name of the content type and creating the connection to the external data source:

Click on the hyperlink text “Click here to discover the external data source operations”, and this window will open:

Click on the “Add Connection” button to create a new connection. Here we have the options to select .NET Type, SQL Server, or WCF Service.

Here we selected SQL Server; now we need to provide the server credentials:

In this screen, we have the options for creating different types of operations against the database:

Click on the Next button:

Parameters configuration:

Options for filter parameters configuration:

Here we need to add a new external list. Click on “External List”:

Select the site here and click the OK button:

Enter the list name here and click the OK button:

After that, refresh the SharePoint site. We can see the external list here; click on the list:

Here we have the error message “Access denied by Business Connectivity.”

Click on the Business Data Connectivity Service:

Set the permissions for this list:

Click OK after setting the permissions:

After that, refresh the site and hope this will work… but again, there is a problem: an error message like Login failed for user “NT AUTHORITY\ANONYMOUS LOGON”.

Then follow the steps mentioned below.

And there is a chance of one more error like:

![sap_integration_en_round[1]](https://sharepointsamurai.files.wordpress.com/2014/09/sap_integration_en_round1.jpg?w=300&h=155)

![sap_integration_en_round[2]](https://sharepointsamurai.files.wordpress.com/2014/09/sap_integration_en_round2.jpg?w=300&h=155)

DNS is a huge part of the inner workings of the internet. Web developers spend a considerable number of hours a year ensuring the sites they build are fast and respond well to user interaction by setting up expensive CDNs, recompressing images, minifying script files, and much more. What a lot of us don’t understand is that DNS server configuration can make a big difference to the speed of your site. Hopefully, at the end of this post you’ll feel empowered to get the most out of this part of your website’s configuration.

![bottlenecks[1]](https://sharepointsamurai.files.wordpress.com/2014/09/bottlenecks1.jpg?w=300&h=196)

![SharePointOnline2L-1[2]](https://sharepointsamurai.files.wordpress.com/2014/08/sharepointonline2l-12.png?w=300&h=108)

![8322.sharepoint_2D00_2010_5F00_4855E582[1]](https://sharepointsamurai.files.wordpress.com/2014/07/8322-sharepoint_2d00_2010_5f00_4855e5821.jpg?w=474&h=261)

![activity diagram uml+activity+diagram+library+mgmt+book+return[1]](https://sharepointsamurai.files.wordpress.com/2014/06/umlactivitydiagramlibrarymgmtbookreturn1-e1403235154656.jpg?w=474)

![ImageGen[1]](https://sharepointsamurai.files.wordpress.com/2014/06/imagegen1.png?w=474)

![5810.image-1[1]](https://sharepointsamurai.files.wordpress.com/2014/06/5810-image-11.png?w=474&h=365)

![microsoft_crm_wallpaper_02[1]](https://sharepointsamurai.files.wordpress.com/2014/06/microsoft_crm_wallpaper_021.jpg?w=167&h=125)

![ImageGen[1]](https://sharepointsamurai.files.wordpress.com/2014/06/imagegen1.png?w=168&h=174)

![clip_image001[6] clip_image001[6]](https://i0.wp.com/blogs.technet.com/cfs-file.ashx/__key/communityserver-blogs-components-weblogfiles/00-00-00-46-82-metablogapi/2234.clip_5F00_image0016_5F00_thumb_5F00_7D89F302.jpg)

One of the core concepts of Business Connectivity Services (BCS) for

![saas[1]](https://sharepointsamurai.files.wordpress.com/2014/05/saas1.jpg?w=300&h=195)

![sap2[1]](https://sharepointsamurai.files.wordpress.com/2014/05/sap21.jpg?w=300&h=148)

![PrimForWindowsRuntime[1]](https://sharepointsamurai.files.wordpress.com/2014/05/primforwindowsruntime1.png?w=474&h=307)

![designmanager[1]](https://sharepointsamurai.files.wordpress.com/2014/03/designmanager1.png?w=300&h=169)